TensorBoard Visualization: Facial Expression Classification with LeNet-5

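Facial Expression Classification

A facial expression is the result of one or more movements or states of the facial muscles. These movements convey an individual's emotional state to observers. Facial expressions are a form of nonverbal communication. They are a primary means of conveying social information between humans, but they also occur in most other mammals and in some other animal species. Humans have at least 21 distinguishable facial expressions: besides the six common ones (happiness, surprise, sadness, anger, disgust, and fear), there are 15 distinguishable compound expressions, such as happily surprised (happiness + surprise) and sadly angry (sadness + anger).

The main application areas of facial expression recognition include human-computer interaction, intelligent control, security, healthcare, and communications.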

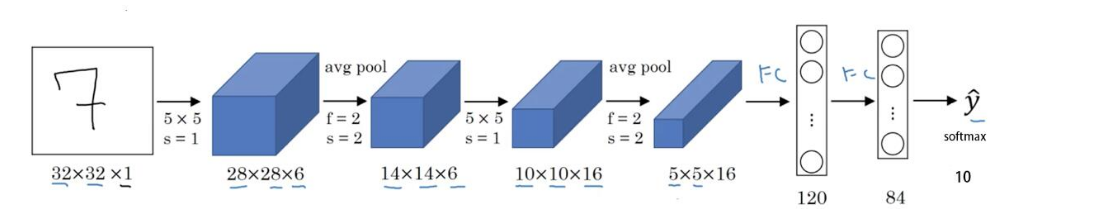

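Network Architecture

LeNet-5 comes from the paper "Gradient-Based Learning Applied to Document Recognition" and is a highly efficient convolutional neural network for handwritten character recognition. The LeNet-5 architecture is as follows:

However, our task is facial expression classification, and the samples in the CK+ dataset are 48×48 pixels, so LeNet-5 needs to be adapted. The adapted architecture is as follows:

The network structure is as follows: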
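As a rough sketch of how the tensor shapes evolve through the adapted network, the snippet below traces a 48×48 grayscale input through two conv + pool stages. It assumes the settings used in the forward-pass code later in this post (stride-1 convolutions and 2×2 stride-2 max pooling, both with `padding='SAME'`, and 32 and 64 kernels). The helper names `same_padding_out` and `trace_shapes` are illustrative and not part of the original code.

```python
import math

def same_padding_out(size, stride):
    """Spatial output size of a conv/pool layer with padding='SAME': ceil(size / stride)."""
    return math.ceil(size / stride)

def trace_shapes(image_size=48, channels=1, conv_kernels=(32, 64)):
    """Return a list of (layer_name, (height, width, depth)) for the conv/pool stack."""
    shapes = [("input", (image_size, image_size, channels))]
    size = image_size
    for i, depth in enumerate(conv_kernels, start=1):
        size = same_padding_out(size, 1)  # stride-1 conv with SAME padding keeps the size
        shapes.append((f"conv{i}", (size, size, depth)))
        size = same_padding_out(size, 2)  # 2x2 max pool with stride 2 halves the size
        shapes.append((f"pool{i}", (size, size, depth)))
    return shapes

shapes = trace_shapes()
for name, shape in shapes:
    print(name, shape)

# Number of features fed into the first fully connected layer
h, w, d = shapes[-1][1]
flat_nodes = h * w * d
print("flatten", flat_nodes)  # 12 * 12 * 64 = 9216
```

Under these assumptions the second pooling layer outputs 12×12×64, so the first fully connected layer receives 9216 features per image.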

The computation graph is as follows:

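Implementation

1. Preprocessing

Load the dataset and preprocess it. The first 225 samples of the test set are also stitched into one large image of 15 × 15 tiles, which is used for the TensorBoard visualization.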
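The stitching step can also be written without explicit loops. The following is a minimal sketch on synthetic data, not the code used in this post: it tiles 225 images of 48×48 pixels into one 720×720 sprite in row-major order, which is the layout the embedding projector expects. The function name `make_sprite` and the random test images are assumptions made for illustration.

```python
import numpy as np

def make_sprite(images, grid):
    """Tile grid*grid images of shape (H, W) into one (grid*H, grid*W) image, row by row."""
    n, h, w = images.shape
    if n != grid * grid:
        raise ValueError("expected exactly grid*grid images")
    return (images.reshape(grid, grid, h, w)   # axes: tile row, tile col, y, x
                  .transpose(0, 2, 1, 3)       # axes: tile row, y, tile col, x
                  .reshape(grid * h, grid * w))

# Synthetic stand-in for the first 225 grayscale samples
rng = np.random.default_rng(0)
fake_images = rng.integers(0, 256, size=(225, 48, 48), dtype=np.uint8)

sprite = make_sprite(fake_images, 15)
print(sprite.shape)  # (720, 720)

# Image number r*15 + c ends up in tile (r, c)
r, c = 1, 2
tile = sprite[r * 48:(r + 1) * 48, c * 48:(c + 1) * 48]
print(np.array_equal(tile, fake_images[r * 15 + c]))
```

The reshape/transpose form produces the same pixel layout as stacking each row with `np.hstack` and then stacking the rows with `np.vstack`.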

%matplotlib inline
import matplotlib.pyplot as plt
import os
import cv2
import numpy as np
from tensorflow import name_scope as namespace
from tensorflow.contrib.tensorboard.plugins import projector

NUM_PIC_SHOW=225
base_filedir='D:/CV/datasets/facial_exp/CK+'
dict_str2int={'anger':0,'contempt':1,'disgust':2,'fear':3,'happy':4,'sadness':5,'surprise':6}

labels=[]
data=[]

#Read every image as grayscale and store it in data; the folder name gives the label
for expdir in os.listdir(base_filedir):
    base_expdir=os.path.join(base_filedir,expdir)
    for name in os.listdir(base_expdir):
        labels.append(dict_str2int[expdir])
        path=os.path.join(base_expdir,name)
        path=path.replace('\\','/') #replace \ with /
        img = cv2.imread(path,0)
        data.append(img)

data=np.array(data)
labels=np.array(labels)

#Shuffle data and labels with the same permutation
permutation = np.random.permutation(data.shape[0])
data = data[permutation,:,:]
labels = labels[permutation]

#Stitch the first 225 images into one large image for the TensorBoard visualization
img_set=data[:NUM_PIC_SHOW] #the first 225 samples are used for display
label_set=labels[:NUM_PIC_SHOW]
big_pic=None
index=0
for row in range(15):
    #Build one row of 15 images
    row_vector=img_set[index]
    index+=1
    for col in range(1,15):
        img=img_set[index]
        row_vector=np.hstack([row_vector,img])
        index+=1
    #Append the finished row below the rows built so far
    if row==0:
        big_pic=row_vector
    else:
        big_pic=np.vstack([big_pic,row_vector])

plt.imshow(big_pic, cmap='gray')
plt.show()

#Write the large image to disk
cv2.imwrite("D:/Jupyter/TensorflowLearning/facial_expression_cnn_projector/data/faces.png",big_pic)

#Flatten each image to a vector and scale pixel values to [0, 1]
data=data.reshape(-1,48*48).astype('float32')/255.0
labels=labels.astype('float32')

#Use the first 30% of the shuffled data as the test set
scale=0.3
test_data=data[:int(scale*data.shape[0])]
test_labels=labels[:int(scale*data.shape[0])]
train_data=data[int(scale*data.shape[0]):]
train_labels=labels[int(scale*data.shape[0]):]
print(train_data.shape)
print(train_labels.shape)
print(test_data.shape)
print(test_labels.shape)

#Convert the labels to one-hot vectors
train_labels_onehot=np.zeros((train_labels.shape[0],7))
test_labels_onehot=np.zeros((test_labels.shape[0],7))
for i,label in enumerate(train_labels):
    train_labels_onehot[i,int(label)]=1
for i,label in enumerate(test_labels):
    test_labels_onehot[i,int(label)]=1
print(train_labels_onehot.shape)
print(test_labels_onehot.shape)
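The one-hot conversion above can also be expressed as a single indexing operation. This is a small sketch on made-up labels, assuming the seven CK+ classes used in this post; the helper name `one_hot` is illustrative, and it returns `float32` rather than the `float64` arrays that `np.zeros` creates by default.

```python
import numpy as np

def one_hot(labels, num_classes):
    """Return an array of shape (len(labels), num_classes) with a single 1 per row."""
    indices = np.asarray(labels).astype(np.int64)  # labels may arrive as float32
    return np.eye(num_classes, dtype=np.float32)[indices]

# Made-up labels standing in for train_labels
demo_labels = np.array([0.0, 4.0, 6.0], dtype=np.float32)
demo_onehot = one_hot(demo_labels, 7)
print(demo_onehot.shape)           # (3, 7)
print(demo_onehot.argmax(axis=1))  # recovers the class indices
```

Row `i` of the identity matrix is exactly the one-hot vector for class `i`, so indexing `np.eye(7)` with the label array builds all rows at once.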

2. Define the Forward Network

import tensorflow as tf

IMAGE_SIZE=48 #image size
NUM_CHANNELS=1 #number of image channels
CONV1_SIZE=5
CONV1_KERNEL_NUM=32
CONV2_SIZE=5
CONV2_KERNEL_NUM=64
FC_SIZE=512 #hidden layer size
OUTPUT_NODE=7 #output size

#Parameter summaries, used to monitor training in TensorBoard
def variable_summaries(var):
    with namespace('summaries'):
        mean=tf.reduce_mean(var)
        tf.summary.scalar('mean',mean) #mean
        with namespace('stddev'):
            stddev=tf.sqrt(tf.reduce_mean(tf.square(var-mean)))
        tf.summary.scalar('stddev',stddev) #standard deviation
        tf.summary.scalar('max',tf.reduce_max(var)) #maximum
        tf.summary.scalar('min',tf.reduce_min(var)) #minimum
        tf.summary.histogram('histogram',var) #histogram

#Create a weight variable and, if requested, register its L2 penalty in the 'losses' collection
def get_weight(shape,regularizer,name=None):
    w=tf.Variable(tf.truncated_normal(shape,stddev=0.1),name=name)
    #variable_summaries(w)
    if regularizer is not None:
        tf.add_to_collection('losses',tf.contrib.layers.l2_regularizer(regularizer)(w))
    return w

#Create a bias variable initialized to zero
def get_bias(shape,name=None):
    b=tf.Variable(tf.zeros(shape),name=name)
    #variable_summaries(b)
    return b

#Define the forward network
def forward(x,train,regularizer):
    with tf.name_scope('layer'):
        #Reshape the flat input into images
        with namespace('reshape_input'):
            x_reshaped=tf.reshape(x,[-1,IMAGE_SIZE,IMAGE_SIZE,NUM_CHANNELS])
        #Two convolutional layers, each followed by max pooling
        with tf.name_scope('conv1'):
            conv1_w=get_weight([CONV1_SIZE,CONV1_SIZE,NUM_CHANNELS,CONV1_KERNEL_NUM],regularizer=regularizer,name='conv1_w')
            conv1_b=get_bias([CONV1_KERNEL_NUM],name='conv1_b')
            conv1=tf.nn.conv2d(x_reshaped,conv1_w,strides=[1,1,1,1],padding='SAME')
            relu1=tf.nn.relu(tf.nn.bias_add(conv1,conv1_b))
            pool1=tf.nn.max_pool(relu1,ksize=[1,2,2,1],strides=[1,2,2,1],padding='SAME')
        with tf.name_scope('conv2'):
            conv2_w=get_weight([CONV2_SIZE,CONV2_SIZE,CONV1_KERNEL_NUM,CONV2_KERNEL_NUM],regularizer=regularizer,name='conv2_w')
            conv2_b=get_bias([CONV2_KERNEL_NUM],name='conv2_b')
            conv2=tf.nn.conv2d(pool1,conv2_w,strides=[1,1,1,1],padding='SAME')
            relu2=tf.nn.relu(tf.nn.bias_add(conv2,conv2_b)) #add the bias to the convolution output, then apply the ReLU nonlinearity
            pool2=tf.nn.max_pool(relu2,ksize=[1,2,2,1],strides=[1,2,2,1],padding='SAME')
        #Flatten the feature maps
        with tf.name_scope('flatten'):
            pool_shape=pool2.get_shape().as_list() #get the tensor shape as a list
            nodes=pool_shape[1]*pool_shape[2]*pool_shape[3] #[0] is the batch size; [1], [2], [3] are height, width, and depth
            #print(type(pool2))
            reshaped=tf.reshape(pool2,[-1,nodes])
        #Two fully connected layers
        with tf.name_scope('fc1'):
            fc1_w=get_weight([nodes,FC_SIZE],regularizer,name='fc1_w')
            fc1_b=get_bias([FC_SIZE],name='fc1_b')
            fc1=tf.nn.relu(tf.matmul(reshaped,fc1_w)+fc1_b)
            if train:
                fc1=tf.nn.dropout(fc1,0.5) #keep probability 0.5
        with tf.name_scope('fc2'):
            fc2_w=get_weight([FC_SIZE,OUTPUT_NODE],regularizer,name='fc2_w')
            fc2_b=get_bias([OUTPUT_NODE],name='fc2_b')
            y=tf.matmul(fc1,fc2_w)+fc2_b
    return y
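To get a feel for the size of this network, the sketch below counts the trainable parameters implied by the constants defined above (5×5 kernels, 32 and 64 feature maps, a 512-unit hidden layer, 7 outputs, and a 12×12×64 feature map after the second pooling layer). The helper functions are illustrative and not part of the original code.

```python
def conv_params(kernel, in_channels, out_channels):
    """Weights plus biases of a kernel x kernel convolution."""
    return kernel * kernel * in_channels * out_channels + out_channels

def fc_params(n_in, n_out):
    """Weights plus biases of a fully connected layer."""
    return n_in * n_out + n_out

# Two SAME-padded stride-2 poolings reduce 48x48 to 12x12
flat_nodes = (48 // 4) * (48 // 4) * 64

param_counts = {
    "conv1": conv_params(5, 1, 32),
    "conv2": conv_params(5, 32, 64),
    "fc1": fc_params(flat_nodes, 512),
    "fc2": fc_params(512, 7),
}
total_params = sum(param_counts.values())

for layer, count in param_counts.items():
    print(layer, count)
print("total", total_params)  # 4774791
```

Almost all of the roughly 4.8 million parameters sit in the first fully connected layer, which is worth keeping in mind given how small the training set is.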

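3. Define Backpropagation, Configure the Visualization, and Train

BATCH_SIZE=100 #number of samples per batch
LEARNING_RATE_BASE=0.005 #base learning rate
LEARNING_RATE_DECAY=0.99 #learning rate decay rate
REGULARIZER=0.0001 #regularization coefficient
STEPS=2500 #number of training steps
MOVING_AVERAGE_DECAY=0.99 #decay rate of the moving average
SAVE_PATH='.\\facial_expression_cnn_projector\\' #directory for checkpoints and logs
data_len=train_data.shape[0]

#Store the test samples that were stitched into big_pic in a variable, for visualization during training
pic_stack=tf.stack(test_data[:NUM_PIC_SHOW]) #stack the image vectors into one tensor
embedding=tf.Variable(pic_stack,trainable=False,name='embedding')

#MakeDirs also creates missing parent directories
if not tf.gfile.Exists(os.path.join(SAVE_PATH,'projector')):
    tf.gfile.MakeDirs(os.path.join(SAVE_PATH,'projector'))

#Create the metadata file that holds the labels of the visualized images;
#if an old one exists, clear the projector directory first
if tf.gfile.Exists(os.path.join(SAVE_PATH,'projector','metadata.tsv')):
    tf.gfile.DeleteRecursively(os.path.join(SAVE_PATH,'projector'))
    tf.gfile.MakeDirs(os.path.join(SAVE_PATH,'projector'))

#Write the labels of the visualized images, one per line
with open(os.path.join(SAVE_PATH,'projector','metadata.tsv'),'w') as f:
    for i in range(NUM_PIC_SHOW):
        f.write(str(label_set[i])+'\n')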

with tf.Session() as sess:
    with tf.name_scope('input'):
        #x=tf.placeholder(tf.float32,[BATCH_SIZE,IMAGE_SIZE,IMAGE_SIZE,NUM_CHANNELS],name='x_input')
        x=tf.placeholder(tf.float32,[None,IMAGE_SIZE*IMAGE_SIZE*NUM_CHANNELS],name='x_input')
        y_=tf.placeholder(tf.float32,[None,OUTPUT_NODE],name='y_input')

    #Reshape the input so it can be displayed as images
    with namespace('input_reshape'):
        image_shaped_input=tf.reshape(x,[-1,IMAGE_SIZE,IMAGE_SIZE,1])
        tf.summary.image('input',image_shaped_input,7) #show up to 7 input images in TensorBoard

    #The graph is built with train=True, so dropout is also active when accuracy is evaluated below
    y=forward(x,True,REGULARIZER)
    global_step=tf.Variable(0,trainable=False)

    with namespace('loss'):
        #Softmax followed by cross-entropy
        ce=tf.nn.sparse_softmax_cross_entropy_with_logits(logits=y,labels=tf.argmax(y_,1))
        cem=tf.reduce_mean(ce) #mean cross-entropy over the batch
        loss=cem+tf.add_n(tf.get_collection('losses')) #add the L2 penalties
        tf.summary.scalar('loss',loss) #loss is a single value, so log it as a scalar

    learning_rate=tf.train.exponential_decay(
        LEARNING_RATE_BASE,
        global_step,
        data_len/BATCH_SIZE,
        LEARNING_RATE_DECAY,
        staircase=True
    )

    with namespace('train'):
        train_step=tf.train.GradientDescentOptimizer(learning_rate).minimize(loss,global_step=global_step)
        ema=tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DECAY,global_step)
        ema_op=ema.apply(tf.trainable_variables())

    with namespace('accuracy'):
        correct_prediction=tf.equal(tf.argmax(y,1),tf.argmax(y_,1))
        accuracy=tf.reduce_mean(tf.cast(correct_prediction,tf.float32))
        tf.summary.scalar('accuracy',accuracy)

    #Group the gradient step and the moving-average update into one op
    with tf.control_dependencies([train_step,ema_op]):
        train_op=tf.no_op(name='train')

    init_op=tf.global_variables_initializer()
    sess.run(init_op)

    #Merge all summaries
    merged=tf.summary.merge_all()
    #Write the graph structure
    writer=tf.summary.FileWriter(os.path.join(SAVE_PATH,'projector'),sess.graph)
    saver=tf.train.Saver() #saves the model

    #Configure the embedding visualization
    config=projector.ProjectorConfig() #TensorBoard projector configuration object
    embed=config.embeddings.add() #add one embedding entry
    embed.tensor_name=embedding.name #variable to visualize
    embed.metadata_path='D:/Jupyter/TensorflowLearning/facial_expression_cnn_projector/projector/metadata.tsv' #label file
    embed.sprite.image_path='D:/Jupyter/TensorflowLearning/facial_expression_cnn_projector/data/faces.png' #sprite image
    embed.sprite.single_image_dim.extend([IMAGE_SIZE,IMAGE_SIZE]) #size of each image in the sprite
    projector.visualize_embeddings(writer,config)

    #Resume from a checkpoint
    #ckpt=tf.train.get_checkpoint_state(os.path.join(SAVE_PATH,'projector'))
    #if ckpt and ckpt.model_checkpoint_path:
    #    saver.restore(sess,ckpt.model_checkpoint_path)

    for i in range(STEPS):
        run_option=tf.RunOptions(trace_level=tf.RunOptions.FULL_TRACE)
        run_metadata=tf.RunMetadata()
        #Slide a window of BATCH_SIZE samples over the training set
        start=(i*BATCH_SIZE)%(data_len-BATCH_SIZE)
        end=start+BATCH_SIZE
        summary,_,loss_value,step=sess.run([merged,train_op,loss,global_step],
                                           feed_dict={x:train_data[start:end],y_:train_labels_onehot[start:end]},
                                           options=run_option,
                                           run_metadata=run_metadata)
        writer.add_run_metadata(run_metadata,'step%03d'%i)
        writer.add_summary(summary,i) #write the summary for step i to the log file
        if i%100==0:
            #Every 100 steps, report test accuracy and save a checkpoint
            acc=sess.run(accuracy,feed_dict={x:test_data,y_:test_labels_onehot})
            print('%d %g'%(step,loss_value))
            print('acc:%f'%(acc))
            saver.save(sess,os.path.join(SAVE_PATH,'projector','model'),global_step=global_step)

    writer.close()
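The training loop relies on two pieces of per-step arithmetic: the staircase learning-rate schedule and the sliding batch window. The following plain-Python sketch reproduces both so they can be inspected without running TensorFlow. It assumes the documented staircase form of `tf.train.exponential_decay`, namely base rate × decay rate ^ floor(step / decay steps). The value `data_len = 687` is a hypothetical training-set size chosen for illustration, not a figure reported by this post.

```python
import math

BATCH_SIZE = 100
LEARNING_RATE_BASE = 0.005
LEARNING_RATE_DECAY = 0.99
STEPS = 2500
data_len = 687  # hypothetical training-set size, for illustration only

def staircase_lr(step, base_lr, decay_rate, decay_steps):
    """Learning rate after `step` updates with staircase exponential decay."""
    return base_lr * decay_rate ** math.floor(step / decay_steps)

def batch_window(i, batch_size, n_samples):
    """Start and end index of the batch used at iteration i."""
    start = (i * batch_size) % (n_samples - batch_size)
    return start, start + batch_size

decay_steps = data_len / BATCH_SIZE  # about one decay per pass over the data
lrs = [staircase_lr(s, LEARNING_RATE_BASE, LEARNING_RATE_DECAY, decay_steps)
       for s in range(STEPS)]
windows = [batch_window(i, BATCH_SIZE, data_len) for i in range(STEPS)]

print(lrs[0], lrs[-1])  # first and last learning rate
print(windows[:8])      # the window wraps around before reaching the end of the data
```

Because the modulus is `data_len - BATCH_SIZE`, every window stays inside the training set and always contains exactly `BATCH_SIZE` samples.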

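Visualizing the Training Process

Run the code above and open TensorBoard. The training accuracy and cross-entropy loss look as follows:

Because there are only a little over six hundred training samples, the curves fluctuate heavily. Training accuracy hovers around 80% to 90% and test accuracy around 70% to 80%, so the accuracy is not high. Below, TensorBoard is used to visualize the training process (the animation was captured with PowerPoint's screen recording and then converted to a GIF with Xunlei):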

————————————————

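Copyright notice: This is an original article by CSDN blogger 「陈建驱」, licensed under CC 4.0 BY-SA. When reposting, please include a link to the original source and this notice.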